概述

我们使用 docker compose 来安装 ClickHouse

但我们不是裸装 ClickHouse,实际上我们安装的是 ClickStack。有点儿像 elastic-stack 与 elastic search 的关系 ,但并不完全一样。

ClickStack 是基于 ClickHouse 构建的完整观察性平台,集成了日志、指标、追踪和会话回放功能,提供统一的用户界面和查询能力。因此,ClickStack 是在 ClickHouse 的基础上,结合 HyperDX 提供的前端界面和 OpenTelemetry Collector 实现的完整解决方案。它不仅仅是一个数据库,而是一个集成的观察性平台。

安装步骤参考官方文档:https://clickhouse.com/docs/zh/use-cases/observability/clickstack/getting-started?loc=use-case-observability

安装

克隆 HyperDX 仓库

1git clone https://github.com/hyperdxio/hyperdx.git

2

3cd hyperdx

4

5# switch to the v2 branch

6git checkout v2

根据自身情况修改配置文件 .env

我将 HDX_IMAGE_REPO=docker.hyperdx.io 修改为 HDX_IMAGE_REPO=docker.io 不然镜像拉不下来

1# Used by docker-compose.yml

2HDX_IMAGE_REPO=docker.hyperdx.io

3IMAGE_NAME=ghcr.io/hyperdxio/hyperdx

4IMAGE_NAME_DOCKERHUB=hyperdx/hyperdx

5LOCAL_IMAGE_NAME=ghcr.io/hyperdxio/hyperdx-local

6LOCAL_IMAGE_NAME_DOCKERHUB=hyperdx/hyperdx-local

7ALL_IN_ONE_IMAGE_NAME=ghcr.io/hyperdxio/hyperdx-all-in-one

8ALL_IN_ONE_IMAGE_NAME_DOCKERHUB=hyperdx/hyperdx-all-in-one

9OTEL_COLLECTOR_IMAGE_NAME=ghcr.io/hyperdxio/hyperdx-otel-collector

10OTEL_COLLECTOR_IMAGE_NAME_DOCKERHUB=hyperdx/hyperdx-otel-collector

11CODE_VERSION=2.0.5

12IMAGE_VERSION_SUB_TAG=.0.5

13IMAGE_VERSION=2

14IMAGE_NIGHTLY_TAG=2-nightly

15IMAGE_LATEST_TAG=latest

16

17# Set up domain URLs

18HYPERDX_API_PORT=8000 #optional (should not be taken by other services)

19HYPERDX_APP_PORT=8080

20HYPERDX_APP_URL=http://localhost

21HYPERDX_LOG_LEVEL=debug

22HYPERDX_OPAMP_PORT=4320

23

24# Otel/Clickhouse config

25HYPERDX_OTEL_EXPORTER_CLICKHOUSE_DATABASE=default

docker compose 启动

1docker-compose up -d

Docker-compose 文件如下:

1name: hdx-oss

2services:

3 # ONLY USED FOR DEMO SSL SETUP

4 # nginx:

5 # image: nginx:1.27.3

6 # volumes:

7 # - ./docker/nginx/nginx.conf:/etc/nginx/nginx.conf

8 # - ./docker/nginx/ssl:/etc/nginx/ssl

9 # - .volumes/nginx_logs:/var/log/nginx

10 # ports:

11 # - 80:80

12 # - 443:443

13 # networks:

14 # - internal

15 # depends_on:

16 # - app

17 db:

18 image: mongo:5.0.14-focal

19 volumes:

20 - .volumes/db:/data/db

21 # WARNING: Exposing the database port will make it accessible from outside the container,

22 # potentially allowing unauthorized access. If you uncomment the ports below,

23 # ensure to secure your database (e.g., with strong authentication, proper network rules, and firewalls).

24 # ports:

25 # - 27017:27017

26 networks:

27 - internal

28 otel-collector:

29 image: ${HDX_IMAGE_REPO}/${OTEL_COLLECTOR_IMAGE_NAME_DOCKERHUB}:${IMAGE_VERSION}

30 environment:

31 CLICKHOUSE_ENDPOINT: 'tcp://ch-server:9000?dial_timeout=10s'

32 HYPERDX_OTEL_EXPORTER_CLICKHOUSE_DATABASE: ${HYPERDX_OTEL_EXPORTER_CLICKHOUSE_DATABASE}

33 HYPERDX_LOG_LEVEL: ${HYPERDX_LOG_LEVEL}

34 OPAMP_SERVER_URL: 'http://app:${HYPERDX_OPAMP_PORT}'

35 ports:

36 - '13133:13133' # health_check extension

37 - '24225:24225' # fluentd receiver

38 - '4317:4317' # OTLP gRPC receiver

39 - '4318:4318' # OTLP http receiver

40 - '8888:8888' # metrics extension

41 restart: always

42 networks:

43 - internal

44 depends_on:

45 - ch-server

46 app:

47 image: ${HDX_IMAGE_REPO}/${IMAGE_NAME_DOCKERHUB}:${IMAGE_VERSION}

48 ports:

49 - ${HYPERDX_API_PORT}:${HYPERDX_API_PORT}

50 - ${HYPERDX_APP_PORT}:${HYPERDX_APP_PORT}

51 environment:

52 FRONTEND_URL: ${HYPERDX_APP_URL}:${HYPERDX_APP_PORT}

53 HYPERDX_API_KEY: ${HYPERDX_API_KEY}

54 HYPERDX_API_PORT: ${HYPERDX_API_PORT}

55 HYPERDX_APP_PORT: ${HYPERDX_APP_PORT}

56 HYPERDX_APP_URL: ${HYPERDX_APP_URL}

57 HYPERDX_LOG_LEVEL: ${HYPERDX_LOG_LEVEL}

58 MINER_API_URL: 'http://miner:5123'

59 MONGO_URI: 'mongodb://db:27017/hyperdx'

60 NEXT_PUBLIC_SERVER_URL: http://127.0.0.1:${HYPERDX_API_PORT}

61 OPAMP_PORT: ${HYPERDX_OPAMP_PORT}

62 OTEL_SERVICE_NAME: 'hdx-oss-api'

63 USAGE_STATS_ENABLED: ${USAGE_STATS_ENABLED:-true}

64 DEFAULT_CONNECTIONS:

65 '[{"name":"Local

66 ClickHouse","host":"http://ch-server:8123","username":"default","password":""}]'

67 DEFAULT_SOURCES:

68 '[{"from":{"databaseName":"default","tableName":"otel_logs"},"kind":"log","timestampValueExpression":"TimestampTime","name":"Logs","displayedTimestampValueExpression":"Timestamp","implicitColumnExpression":"Body","serviceNameExpression":"ServiceName","bodyExpression":"Body","eventAttributesExpression":"LogAttributes","resourceAttributesExpression":"ResourceAttributes","defaultTableSelectExpression":"Timestamp,ServiceName,SeverityText,Body","severityTextExpression":"SeverityText","traceIdExpression":"TraceId","spanIdExpression":"SpanId","connection":"Local

69 ClickHouse","traceSourceId":"Traces","sessionSourceId":"Sessions","metricSourceId":"Metrics"},{"from":{"databaseName":"default","tableName":"otel_traces"},"kind":"trace","timestampValueExpression":"Timestamp","name":"Traces","displayedTimestampValueExpression":"Timestamp","implicitColumnExpression":"SpanName","serviceNameExpression":"ServiceName","bodyExpression":"SpanName","eventAttributesExpression":"SpanAttributes","resourceAttributesExpression":"ResourceAttributes","defaultTableSelectExpression":"Timestamp,ServiceName,StatusCode,round(Duration/1e6),SpanName","traceIdExpression":"TraceId","spanIdExpression":"SpanId","durationExpression":"Duration","durationPrecision":9,"parentSpanIdExpression":"ParentSpanId","spanNameExpression":"SpanName","spanKindExpression":"SpanKind","statusCodeExpression":"StatusCode","statusMessageExpression":"StatusMessage","connection":"Local

70 ClickHouse","logSourceId":"Logs","sessionSourceId":"Sessions","metricSourceId":"Metrics"},{"from":{"databaseName":"default","tableName":""},"kind":"metric","timestampValueExpression":"TimeUnix","name":"Metrics","resourceAttributesExpression":"ResourceAttributes","metricTables":{"gauge":"otel_metrics_gauge","histogram":"otel_metrics_histogram","sum":"otel_metrics_sum","_id":"682586a8b1f81924e628e808","id":"682586a8b1f81924e628e808"},"connection":"Local

71 ClickHouse","logSourceId":"Logs","traceSourceId":"Traces","sessionSourceId":"Sessions"},{"from":{"databaseName":"default","tableName":"hyperdx_sessions"},"kind":"session","timestampValueExpression":"TimestampTime","name":"Sessions","displayedTimestampValueExpression":"Timestamp","implicitColumnExpression":"Body","serviceNameExpression":"ServiceName","bodyExpression":"Body","eventAttributesExpression":"LogAttributes","resourceAttributesExpression":"ResourceAttributes","defaultTableSelectExpression":"Timestamp,ServiceName,SeverityText,Body","severityTextExpression":"SeverityText","traceIdExpression":"TraceId","spanIdExpression":"SpanId","connection":"Local

72 ClickHouse","logSourceId":"Logs","traceSourceId":"Traces","metricSourceId":"Metrics"}]'

73 networks:

74 - internal

75 depends_on:

76 - ch-server

77 - db

78 ch-server:

79 image: clickhouse/clickhouse-server:24-alpine

80 # WARNING: Exposing the database port will make it accessible from outside the container,

81 # potentially allowing unauthorized access. If you uncomment the ports below,

82 # ensure to secure your database (e.g., with strong authentication, proper network rules, and firewalls).

83 ports:

84 - 8123:8123 # http api

85 - 9050:9000 # native

86 # environment:

87 # default settings

88 # CLICKHOUSE_DEFAULT_ACCESS_MANAGEMENT: 1

89 volumes:

90 - ./docker/clickhouse/local/config.xml:/etc/clickhouse-server/config.xml

91 - ./docker/clickhouse/local/users.xml:/etc/clickhouse-server/users.xml

92 - ./empty.xml:/etc/clickhouse-server/users.d/default-password.xml

93 - .volumes/ch_data:/var/lib/clickhouse

94 - .volumes/ch_logs:/var/log/clickhouse-server

95 restart: on-failure

96 networks:

97 - internal

98networks:

99 internal:

注意:environment 部分我注释掉了,另外 加了一行:./empty.xml:/etc/clickhouse-server/users.d/default-password.xml 作用是解决 clickhouse 连接异常的问题。

通过 /data/clickhouse/hyperdx/docker/clickhouse/local/users.xml 可以看到 clickhouse 的账户信息:

1<?xml version="1.0"?>

2<clickhouse>

3 <profiles>

4 <default>

5 <max_memory_usage>10000000000</max_memory_usage>

6 <use_uncompressed_cache>0</use_uncompressed_cache>

7 <load_balancing>in_order</load_balancing>

8 <log_queries>1</log_queries>

9 </default>

10 </profiles>

11

12 <users>

13 <default>

14 <password_sha256_hex>2d964690ad5ac2d2f78bebadc30895bc519969ffcef4d3c9e7ff04ee1c765d96</password_sha256_hex>

15 <profile>default</profile>

16 <networks>

17 <ip>::/0</ip>

18 </networks>

19 <quota>default</quota>

20 </default>

21 <api>

22 <password>api</password>

23 <profile>default</profile>

24 <networks>

25 <ip>::/0</ip>

26 </networks>

27 <quota>default</quota>

28 </api>

29 <worker>

30 <password>worker</password>

31 <profile>default</profile>

32 <networks>

33 <ip>::/0</ip>

34 </networks>

35 <quota>default</quota>

36 </worker>

37 </users>

38

39 <quotas>

40 <default>

41 <interval>

42 <duration>3600</duration>

43 <queries>0</queries>

44 <errors>0</errors>

45 <result_rows>0</result_rows>

46 <read_rows>0</read_rows>

47 <execution_time>0</execution_time>

48 </interval>

49 </default>

50 </quotas>

51</clickhouse>

密码用 sha256sum 处理过

可以这样生成:

1echo -n '你的密码' | sha256sum

运行

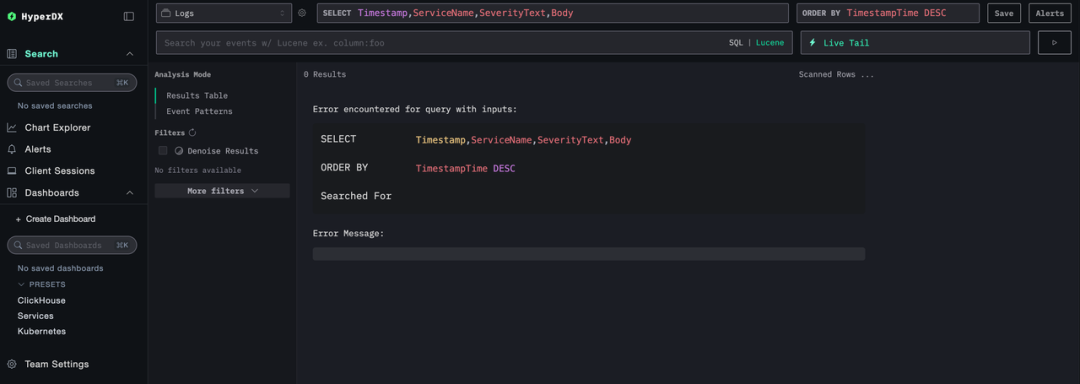

点击登录跳转至首页

点击登录跳转至首页

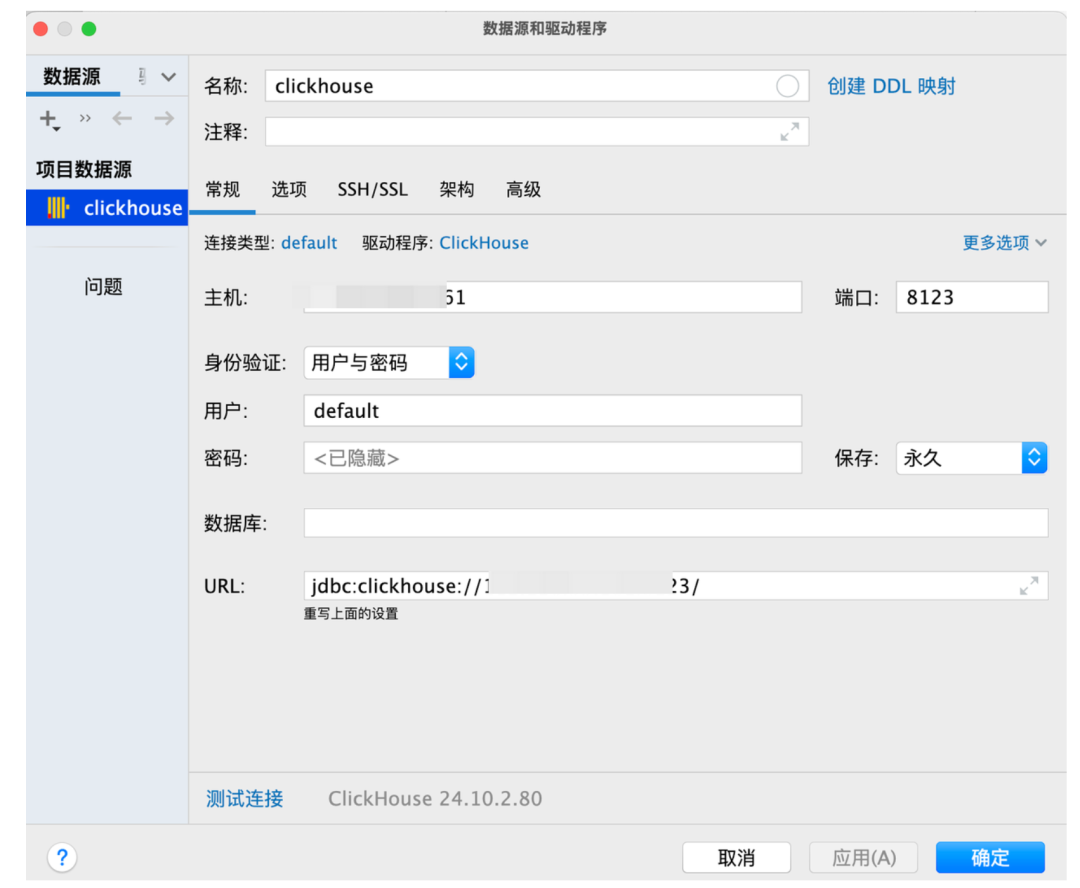

客户端连接

- 用户名:default

- 密码: 你的密码

- 端口:8123

数据库初始化

初始化 sql 脚本

1/* 1. 创建数据库(如已存在可先 DROP DATABASE IF EXISTS testdb) */

2CREATE DATABASE IF NOT EXISTS testdb;

3

4/* 2. 维度表:用户 */

5CREATE TABLE IF NOT EXISTS testdb.users (

6 user_id UInt32,

7 user_name String,

8 signup_date Date

9) ENGINE = MergeTree

10ORDER BY user_id;

11

12/* 3. 维度表:页面 */

13CREATE TABLE IF NOT EXISTS testdb.pages (

14 page_id UInt32,

15 page_url String,

16 category String

17) ENGINE = MergeTree

18ORDER BY page_id;

19

20/* 4. 事实表:页面访问日志 */

21CREATE TABLE IF NOT EXISTS testdb.pageviews (

22 event_date Date,

23 event_time DateTime,

24 user_id UInt32,

25 page_id UInt32,

26 duration UInt32 -- 停留秒数

27) ENGINE = MergeTree

28PARTITION BY toYYYYMM(event_date)

29ORDER BY (event_date, user_id, page_id);

30

31/* 5. 物化视图:每日 PV / UV 聚合 */

32CREATE MATERIALIZED VIEW IF NOT EXISTS testdb.pv_uv_daily

33ENGINE = SummingMergeTree

34PARTITION BY toYYYYMM(event_date)

35ORDER BY event_date

36AS

37SELECT

38 event_date,

39 count() AS pv,

40 uniqExact(user_id) AS uv

41FROM testdb.pageviews

42GROUP BY event_date;

43

44/* 6. 演示数据插入 ---------------------------------------- */

45

46/* 用户维度 */

47INSERT INTO testdb.users (user_id, user_name, signup_date) VALUES

48 (1, 'Alice', '2024-06-01'),

49 (2, 'Bob', '2024-07-15'),

50 (3, 'Cathy', '2024-11-30');

51

52/* 页面维度 */

53INSERT INTO testdb.pages (page_id, page_url, category) VALUES

54 (10, '/home', 'landing'),

55 (11, '/pricing', 'info'),

56 (12, '/blog', 'content');

57

58/* 页面访问日志 */

59INSERT INTO testdb.pageviews (event_date, event_time, user_id, page_id, duration) VALUES

60 ('2025-07-13', '2025-07-13 09:17:00', 1, 10, 35),

61 ('2025-07-13', '2025-07-13 09:18:07', 1, 11, 50),

62 ('2025-07-13', '2025-07-13 09:19:02', 2, 10, 15),

63 ('2025-07-14', '2025-07-14 10:03:45', 3, 12, 120),

64 ('2025-07-14', '2025-07-14 10:05:22', 1, 12, 90);

65

66/* 7. 快速验证 --------------------------------------------- */

67

68/* 查看当前数据库已创建的表 */

69SHOW TABLES FROM testdb;

70

71/* 查询物化视图结果 */

72SELECT * FROM testdb.pv_uv_daily ORDER BY event_date;

73

74/* 联表查询示例 */

75SELECT

76 u.user_name,

77 p.page_url,

78 v.event_time,

79 v.duration

80FROM testdb.pageviews AS v

81LEFT JOIN testdb.users AS u ON v.user_id = u.user_id

82LEFT JOIN testdb.pages AS p ON v.page_id = p.page_id

83ORDER BY v.event_time DESC;